The Fixation on Fixing

Let me fix that for you.

-- Well-meaning people everywhere

Often, as technically-minded folk, we have an urge to fix things for others. This naturally extends to our work: when we hear of a problem with our product, our minds go straight to the logical cause, and to how we can fix the problem and save the day. I call this the "see a problem - solve a problem" trap. The longer this goes on, the more we create a dependence on ourselves, and the further we distance ourselves from the problems we set out to solve.

We create a dependence on ourselves when we fail to address the human part of the cause: ultimately, the reason we're being called upon to save the day is that what we've created has led someone to a dead end. We know we can save the day, because we have the insight needed to unblock them. But in doing so, we guarantee that we'll be called upon to do it again. If, instead, we resist the urge to "see a problem - solve a problem", we might see that the fault lies not in our user's usage, but in the product's presentation. If we focus on improving the product rather than unblocking the user, we might sacrifice the glory that comes from saving the day. But we also create the opportunity to extend our impact beyond the day, potentially diverting many other users from reaching the same dead end.

The "see a problem - solve a problem" trap also distances us from important problems, by narrowing our focus to the problems our product created rather than those it set out to solve. Sometimes this is important: we certainly want to improve our product and reduce the problems it causes. But the returns diminish quickly. It doesn't take long for product improvements to be at odds with the product's purpose. Before you know it, all your efforts are spent on ensuring the product works, rather than on ensuring the product solves a problem. As long as the money doesn't run out first, what you end up with is a very slick solution to a problem that doesn't exist. Money will prop up manufactured demand for a while, but that's hardly the way to fulfilment.

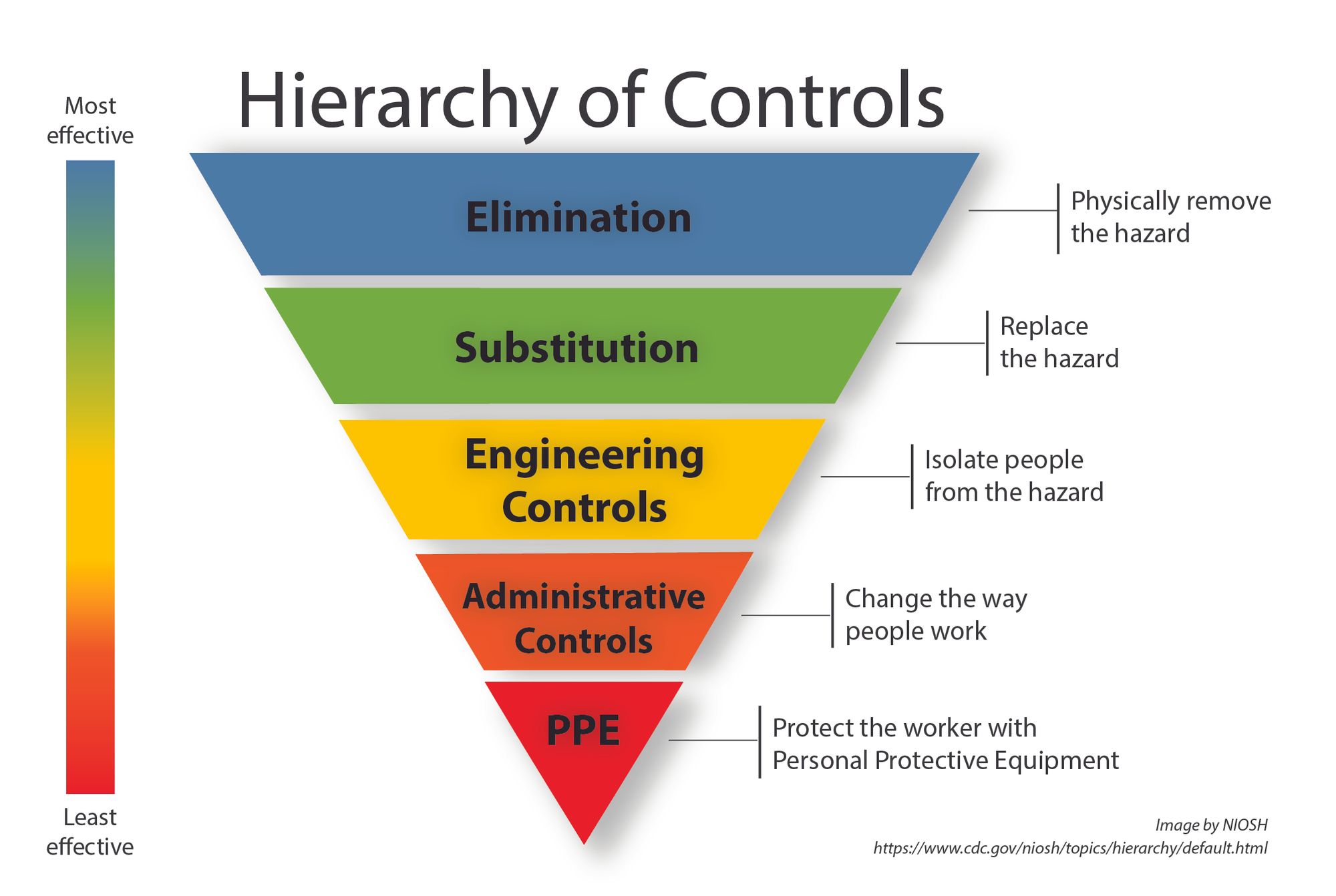

In fields where lives are at stake, rather than just competitive advantage, there tend to be guardrails to prevent this sort of natural navel-gazing. One of my favourites is the Hierarchy of Controls. It provides a step-by-step approach to dealing with risks that controls for the "see a problem - solve a problem" trap, which occurs at all levels. Instead of mitigating a risk using the solution that first springs to mind, the Hierarchy of Controls gives precedence to solutions that are more likely to ensure the problem is gone, not just hidden. In order of preference, its levels are elimination, substitution, engineering controls, administrative controls, and personal protective equipment. It's the workplace equivalent of the "prevention is better than cure" advice.

That "engineering controls" appears halfway down the list of ways to prevent harm is a healthy lesson for all engineers.

So next time you have the opportunity to save the day with some engineering brilliance, stop. Step back, zoom out, and consider what caused the issue in the first place.

Focus not on the instance of the problem, but the essence of the problem.

If a user of your product comes to grief, resist the urge to explain what they did wrong and fix it for them. Not only does this make users feel like they're the problem, it guarantees that others will run aground on the same rock.

Consider instead that your product may have instigated harm, and you've just been presented with an opportunity to prevent it happening to others. Believe your users - your product, after all, is for them. If appropriate, validate their experience - a conversation with your users is a precious resource and the channels for it are fragile. And finally, keep your cleverness in check and aim for usefulness instead. Sometimes, as brilliant as it may be, your solution does not need to be fixed. Sometimes it needs to be eliminated.